Breaking realtime records with The Washington Post election coverage

How The Washington Post broke realtime records by using WebSockets to deliver the 2020 US Presidential Election results to global readers.

Introduction

On Wednesday 4th November, The Washington Post broke Pusher records as it delivered realtime coverage of the results of one of its largest news events to date. The 2020 US presidential election was followed around the world, and The Washington Post served live updates to readers' browsers globally, at peak sending over 1 million messages per second.

We spoke to the team behind their election pages about why having flawless realtime delivery was vital for the event and how they used Pusher Channels to build the engine for their live results.

Jeremy Bowers, Director of Engineering, leads the research and development team assembled to experiment with new technologies for the election effort. Knowing they could be serving their largest election audience on record, the team faced two major hurdles that would make or break the event: speed and reliability.

“We’d already built these pages which were very lightweight and fast, but we needed a way to get data to people as election results are updating. That was really the hard problem,” Jeremy told us.

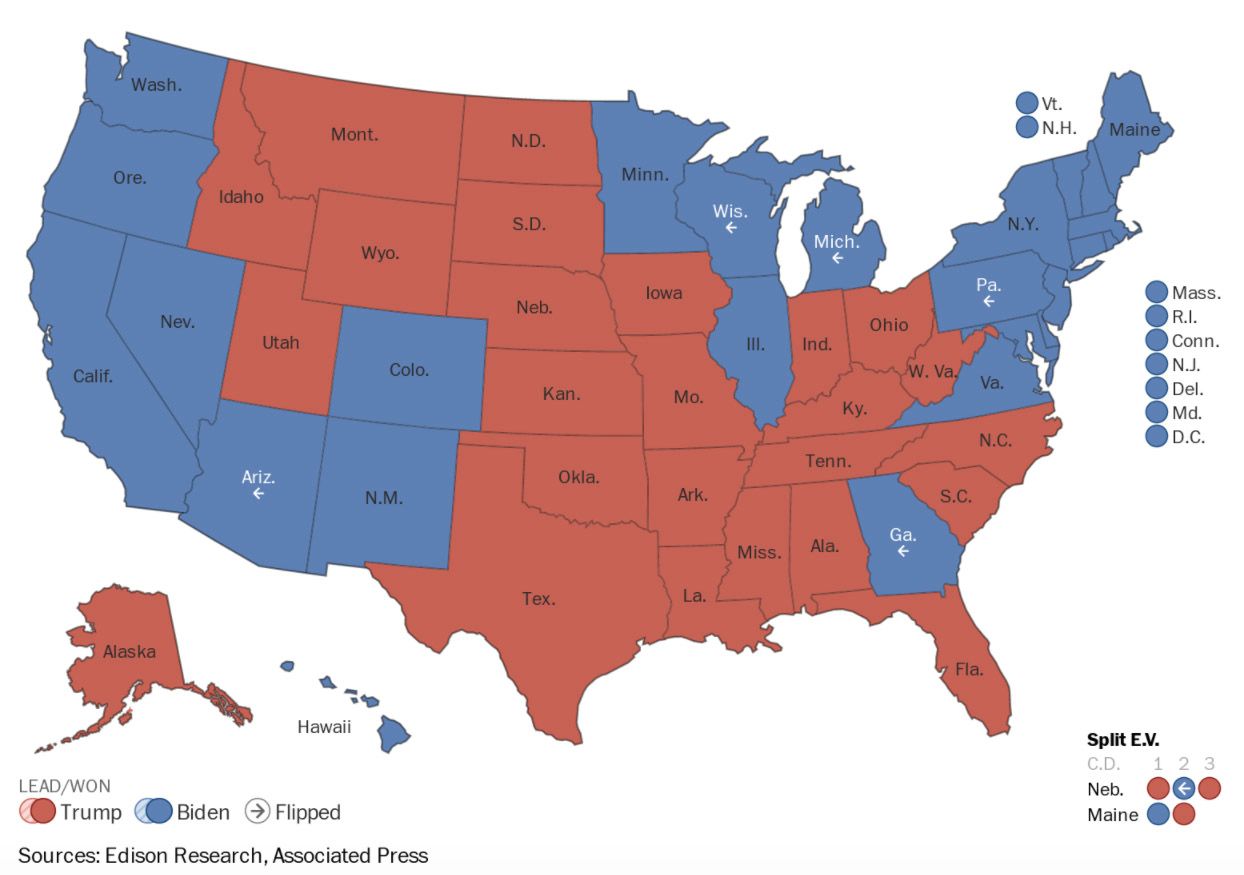

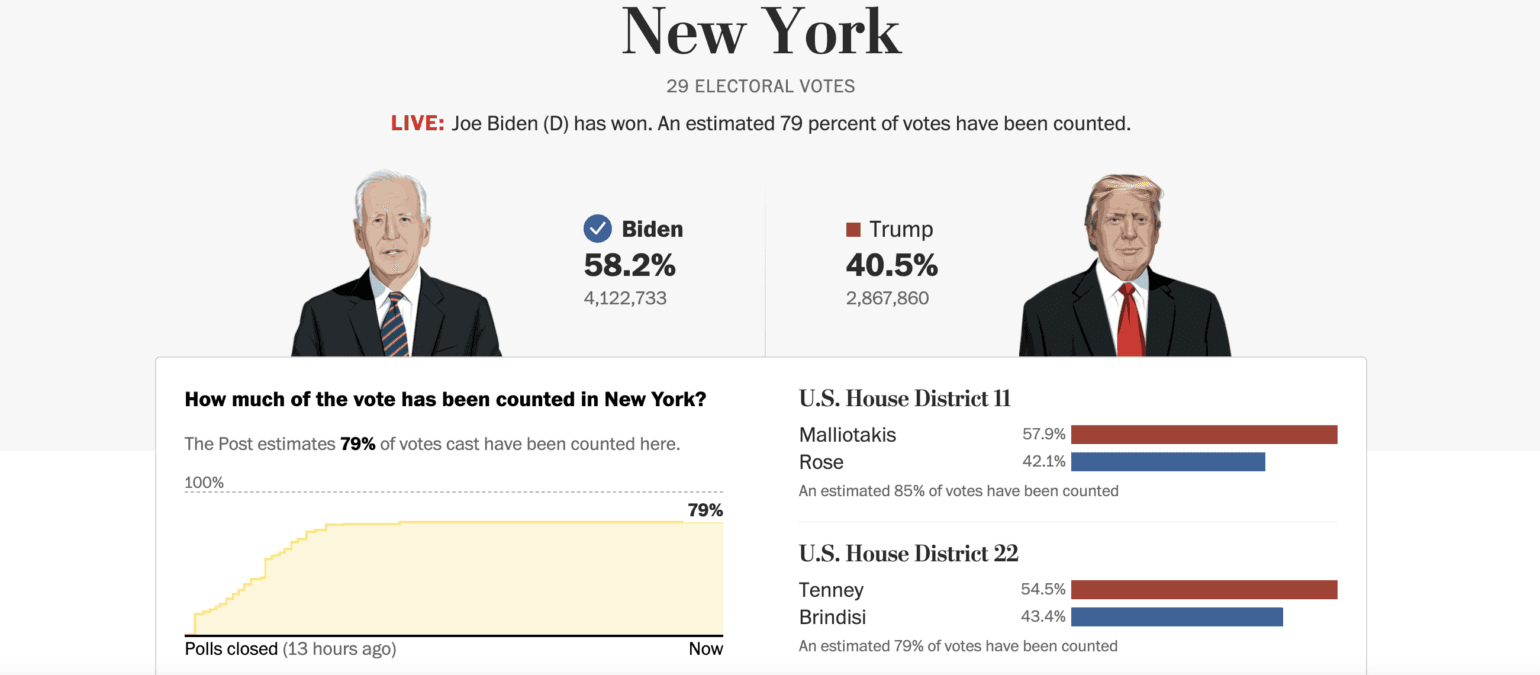

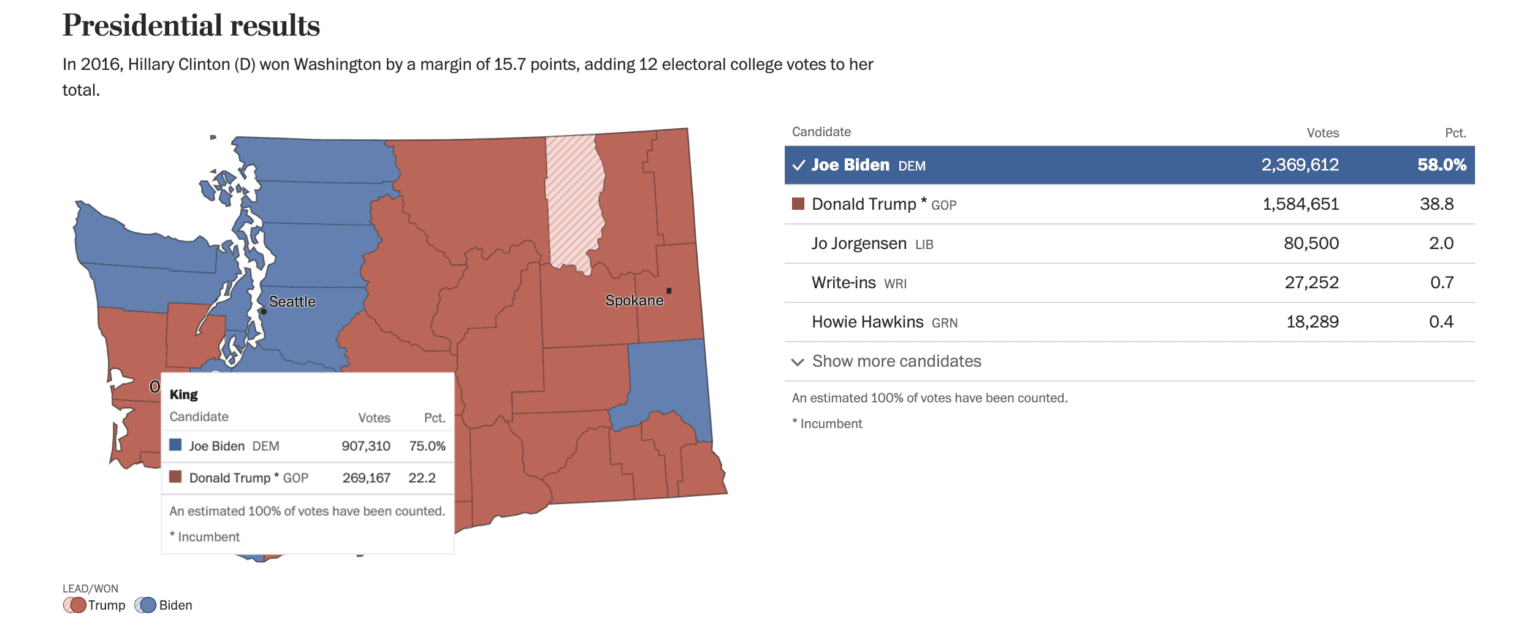

Modern news coverage is all about speed. Reporters and data teams work closely with technical teams to bring a sense of omniscience to their events. A multitude of sources must be fed into systems and delivered to live dashboards that give readers an automated, realtime pulse of information. For an event as significant as the 2020 presidential election, keeping the data feed live and the updates as close to instant as possible was crucial. With most of the audience arriving on mobile web browsers, feeding the data from every race in the country at realtime speed was an immense task.

“Think about the page that you have operating on your phone: this tiny little map of the US, and underneath it you have a tiny little US Senate map and underneath that you have a little grid of the House, which is 435 House seats. We have to feed all of it. It’s every state, twice, because we need it once for the presidency and once for the Senate, and we need all of the data for the House. It’s just a giant pile of data.”

The team knew that polling from JavaScript, triggering AJAX data loads every few seconds, would be inefficient given their extremely granular data requirements, not to mention disastrous for performance, particularly on the mobile web.

“It’s really bad for the DOM on the phone. That makes the phone feel slow and janky after maybe five minutes and then the other problem is that it moves a lot of data. The bandwidth on these devices is seriously limited and we have a lot of empathy for our customers who are coming to us on devices that are maybe a little older. It’s not like every Washington Post subscriber has whatever the newest, finest device is and they’re not all on 5G networks. It’s our job to get them data fast. Even if they happen to be on a network or a phone that’s not particularly fast.”

After testing a few approaches, they decided to tackle the event by building a WebSocket implementation that pushed a huge number of atomic updates across the web almost instantly.

“We tested a solution where we hosted a Socket server. We tested with Socket.IO, and it was fine. I didn’t hate it at all.” That test had only run at a much smaller scale, for the 2019 Virginia general election, however, and the constraints of scaling their own first implementation quickly became apparent: “Scaling a WebSocket implementation isn’t fun.”

“We definitely could have built something like this but we wouldn’t have tested it nearly as much. We need our engineers on the features that we don’t have a trusted partner for. We need to build these data visualisations and those require the same engineers who would be working on implementing a WebSocket implementation or working on scaling our server. We would rather put our time into something that is a novel reader feature, like an election night model or our fancy maps. So we would rather partner with somebody on the stuff where other people have expertise.”

Enter Pusher Channels.

Jeremy was familiar with Channels from previous projects and was confident both in the API's ability to handle their use case and in the resources available to the 60-strong team implementing it across the election pages and the homepage. “The docs are great. Literally everything’s great. The developer experience for us was fantastic. That was one of the strengths of it too: if we build our own, we don’t have the documentation, we don’t have API snippets, we have to write all of that ourselves. When we’re working with Pusher not only do we have the trusted infrastructure partnership, we also have all of the documentation, the snippets, the client side library which has fallbacks and stuff. That’s nice. It just makes life a lot easier.”

The final implementation met the speed requirement effortlessly. Although a Slack channel had been set up to alert editors as soon as updates came in, readers watching the results pages got the information first, because the WebSocket update was faster: “You would see a WebSocket come in and then it would take an extra beat or two and the Slack message would show up.”

Jeremy’s team, responsible for building and maintaining the individual election race pages, worked closely with The Washington Post’s large site team to deploy combinations of their modules to the homepage and main election landing page.

“The hardest implementation was not on our pages, which was easy. You get the client on the page, do some testing, a little debouncing for events and we’re good to go. The hardest part for us was our homepage implementation. We needed an unknown number of modules on the page that relied on Pusher data, so we needed to know what channels to subscribe to. It was slightly tricky to figure out how every individual module could request the channel it needed so the page only had to subscribe to a single socket with multiple channels subscribed. But once we got that little logic bit filled out it was easy.”
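Jeremy mentions “a little debouncing for events” without giving details. The sketch below assumes the goal is to collapse a burst of updates for one results module into a single re-render using the latest data. The `debounce` helper, the `RaceUpdate` shape, the `"va-senate"` race ID and the 50 ms wait are all hypothetical illustrations, not The Washington Post's code or Pusher's payload format.

```typescript
// Hypothetical helper: collapse a burst of rapid calls into a single call
// made `waitMs` after the last one, using the most recent arguments.
function debounce<A extends unknown[]>(
  fn: (...args: A) => void,
  waitMs: number,
): (...args: A) => void {
  let timer: ReturnType<typeof setTimeout> | undefined;
  return (...args: A) => {
    // Each new call cancels the pending one, so only the last call in a burst runs.
    if (timer !== undefined) clearTimeout(timer);
    timer = setTimeout(() => {
      timer = undefined;
      fn(...args);
    }, waitMs);
  };
}

// Hypothetical payload for a race update (assumed shape, for illustration only).
interface RaceUpdate {
  raceId: string;
  reportingPct: number;
}

const rendered: RaceUpdate[] = [];

// Stand-in for an expensive DOM re-render of one results module.
const renderRace = debounce((update: RaceUpdate) => {
  rendered.push(update);
}, 50);

// Simulate five updates for the same race arriving back to back.
for (let pct = 10; pct <= 50; pct += 10) {
  renderRace({ raceId: "va-senate", reportingPct: pct });
}
// About 50 ms later, renderRace's callback runs once, with reportingPct 50.
```

With the pusher-js client, a debounced function like this could be passed as the callback to `channel.bind(...)`. Keeping one debounced renderer per module (rather than one shared across all races) avoids a burst for one race swallowing an update for another.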

An earlier socket implementation, tested during the election primaries, had placed homepage units in multiple iframes, each with its own dedicated socket, but the approach proved excessively complex. “Each visitor to the homepage that day we were spinning up like four sockets. It was really unnecessary. When we thought about how we wanted to do this for the general it was really obvious: you hit the homepage, you load the Pusher library, you load a single connection, it has all of the subscriptions that you need to feed all the components around the page. That’s what we ended up with for the general this year and it worked flawlessly.”
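The single-connection homepage pattern described above can be sketched as a small registry: each module asks for the channel it needs, and the registry subscribes to each distinct channel once on one shared client. This is a minimal sketch under assumptions: `ChannelRegistry`, `FakeClient` and the channel and event names are hypothetical, and the two interfaces only loosely mirror the shape of pusher-js (`subscribe` returning a channel that has `bind`). They are not the library's actual type definitions.

```typescript
// Minimal client shapes, loosely modelled on pusher-js (assumption, not its real types).
interface ChannelLike {
  bind(eventName: string, callback: (data: unknown) => void): void;
}
interface SocketClientLike {
  subscribe(channelName: string): ChannelLike;
}

// Hypothetical registry: modules request channels; each distinct channel is
// subscribed once, all on the same shared connection.
class ChannelRegistry {
  private client: SocketClientLike;
  private channels = new Map<string, ChannelLike>();

  constructor(client: SocketClientLike) {
    this.client = client;
  }

  request(channelName: string, eventName: string, onData: (data: unknown) => void): void {
    let channel = this.channels.get(channelName);
    if (channel === undefined) {
      channel = this.client.subscribe(channelName);
      this.channels.set(channelName, channel);
    }
    channel.bind(eventName, onData);
  }

  subscribedChannels(): string[] {
    return [...this.channels.keys()];
  }
}

// In-memory fake client so the sketch runs without a network connection.
class FakeClient implements SocketClientLike {
  subscribeCalls: string[] = [];
  private handlers = new Map<string, Array<(data: unknown) => void>>();

  subscribe(channelName: string): ChannelLike {
    this.subscribeCalls.push(channelName);
    return {
      bind: (eventName, callback) => {
        const key = `${channelName}:${eventName}`;
        const list = this.handlers.get(key) ?? [];
        list.push(callback);
        this.handlers.set(key, list);
      },
    };
  }

  // Simulate the server pushing an event on a channel.
  emit(channelName: string, eventName: string, data: unknown): void {
    for (const cb of this.handlers.get(`${channelName}:${eventName}`) ?? []) cb(data);
  }
}

const client = new FakeClient();
const registry = new ChannelRegistry(client);
const received: string[] = [];

// Three homepage modules; two of them share the presidential channel.
registry.request("president", "update", () => received.push("map"));
registry.request("president", "update", () => received.push("ticker"));
registry.request("senate", "update", () => received.push("senate-grid"));

client.emit("president", "update", { state: "PA" });
// client.subscribeCalls is ["president", "senate"]; received is ["map", "ticker"].
```

In a browser, the fake would be replaced by a single client instance created once when the page loads, so every module on the page shares one socket regardless of how many modules there are.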

The WebSocket implementation allowed the complex data to be delivered through a series of comprehensive, accessible dashboards.

But the biggest attraction when it came to choosing a trusted partner rather than building in house? Support. This was their Super Bowl, the biggest event they would cover for the foreseeable future, and having a faultless service was non-negotiable.

To guarantee the support coverage required for election day, The Washington Post opted to use Pusher’s Major Event Support package. “We spend a lot of time thinking about disaster recovery scenarios. For every partnership we have we need the ability to have that instantaneous communication. That’s really rare because the model for election night is so odd when it comes to support. Most of the time the way that support would work is that we would file a ticket and in five minutes we would get somebody back and we’d have 24 hour coverage. On election night it’s not like we need 99% coverage. We need 100% coverage.”

Jeremy’s team was supported 24/7 throughout the election coverage by Pusher’s engineers, who monitored traffic and latency, with instant communication available via a Slack channel. The live support was a win for The Washington Post team from top to bottom. “The people at my level and above, they care about risk aversion. How do we minimise risk? How do we make sure that there are folks available in the event that something bad happens? The folks at my level and below, they care about developer experience. We were very lucky that we got to have both of those things. It feels relatively rare that you can have a very solid developer experience and a very high level of work against risk tolerance.”

Over six days, The Washington Post broke Channels records, opening over 37 million new connections and sending nearly 20 billion messages per day at peak. The team is now excited about expanding WebSockets across the site.

“The big thing we do is liveness. It’s a relatively new area of expansion for us. TV networks are used to it. Newspapers are [used to it] only because of the web. We used to have to print this stuff off on trees so it’s a little more difficult. I can see sporting events being a big deal. I can see live blogs and other things like that being a big deal. Chats too. We are constantly keeping an eye out for new ways to have live interactions with our readers.”

Now that the team has given the rest of the organization a proof of concept, the range of live news experiences The Washington Post can deliver in realtime is wide open.

Want to deliver engaging live data updates to your own users with Pusher dashboards? Learn how to build a dynamic realtime poll results app using Channels and Beams or find out more about delivering realtime results with Pusher.