Testing SEO in React apps using Fetch as Google

Learn how to quickly test the SEO performance of your React apps using Fetch as Google. This tutorial provides a sample app to work with, and walks you through using Fetch as Google in Google Search Console.

Introduction

Search engine optimization (SEO) is an important factor to consider when building an application. You want your page to rank at the top when users come searching. The general belief among SEO experts is that you should implement server-side rendering on your page. This way, the web crawler of Google (the most popular search engine right now) can access your HTML and index it.

Unfortunately, server-side rendering is not a “one size fits all” solution. For example, your backend might already be built and might not run Node.

Other solutions include using a pre-rendering service or a framework such as Next.js, which is used to scaffold React apps and provides ready-to-use tools for server-side rendering, such as setting HTML tags for SEO and fetching data before rendering components during the build process.

Certain studies have shown that Googlebot is smarter than we think and that it’s okay to use a client-side rendered layout for our React applications. Even so, it is smarter to make sure all boxes are ticked: know whether Googlebot can see your React components and, if it can’t, how to make it do so.

A little testing always helps…

Although Googlebot can crawl client-rendered React applications, it’s best to be cautious and test how web crawlers actually see your site. Fortunately, there’s already a tool for that: Google’s Fetch as Google tool lets you test how Google crawls or renders a URL on your site. It simulates the crawl and render steps of Google’s normal crawling and rendering process.

You can use Fetch as Google to accomplish the following:

- Know if Googlebot can access a page on your site

- Know how Googlebot renders the pages on your site

- Know if any page resources such as images or scripts are blocked to Googlebot
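One common reason a script or image is blocked for Googlebot is a Disallow rule in the site's robots.txt file. The sketch below is a deliberately simplified check, assuming prefix-only Disallow rules (no wildcards, no Allow lines, no longest-match logic). The `isDisallowed` function and the sample file are hypothetical and are not part of any Google tool.

```javascript
// Simplified robots.txt check: prefix-only Disallow rules, no wildcards, no Allow.
// isDisallowed and sampleRobots are illustrative assumptions, not a Google API.
function isDisallowed(robotsTxt, path, userAgent) {
  const agent = userAgent.toLowerCase();
  const groups = []; // each group: { agents: [...], disallow: [...] }
  let current = null;
  let lastWasAgent = false;

  for (const rawLine of robotsTxt.split(/\r?\n/)) {
    const line = rawLine.split("#")[0].trim(); // drop comments
    const sep = line.indexOf(":");
    if (sep === -1) continue; // skip blank or malformed lines
    const field = line.slice(0, sep).trim().toLowerCase();
    const value = line.slice(sep + 1).trim();

    if (field === "user-agent") {
      // Consecutive User-agent lines share one group of rules.
      if (!lastWasAgent) {
        current = { agents: [], disallow: [] };
        groups.push(current);
      }
      current.agents.push(value.toLowerCase());
      lastWasAgent = true;
    } else {
      if (field === "disallow" && current && value !== "") {
        current.disallow.push(value);
      }
      lastWasAgent = false;
    }
  }

  // Prefer a group that names this agent; otherwise fall back to the "*" group.
  const group =
    groups.find((g) => g.agents.includes(agent)) ||
    groups.find((g) => g.agents.includes("*"));
  if (!group) return false;
  return group.disallow.some((prefix) => path.startsWith(prefix));
}

// Hypothetical robots.txt content for illustration.
const sampleRobots = [
  "User-agent: *",
  "Disallow: /static/js/",
  "",
  "User-agent: Googlebot",
  "Disallow: /private/",
].join("\n");

console.log(isDisallowed(sampleRobots, "/static/js/main.js", "Googlebot")); // false
console.log(isDisallowed(sampleRobots, "/private/report", "Googlebot")); // true
console.log(isDisallowed(sampleRobots, "/static/js/main.js", "OtherBot")); // true
```

If a bundle your page depends on turned out to be disallowed for Googlebot, the rendered result could differ from what a visitor sees, which is the kind of problem Fetch as Google is meant to surface.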

To use Fetch as Google, you will need a React web app deployed to a publicly accessible URL. You can check out and fork the GitHub repository of the React app I created for this project here.

For deployment, Heroku will work just fine. If you’re having issues deploying your app to Heroku, you can check out this short but detailed guide written by Alex Gvozden here.

Basically here’s what our application will look like:

```jsx
import React, { Component } from "react";

class App extends Component {
  render() {
    return (
      <div>
        <h1>Googlebot will always crawl</h1>
      </div>
    );
  }
}

export default App;
```

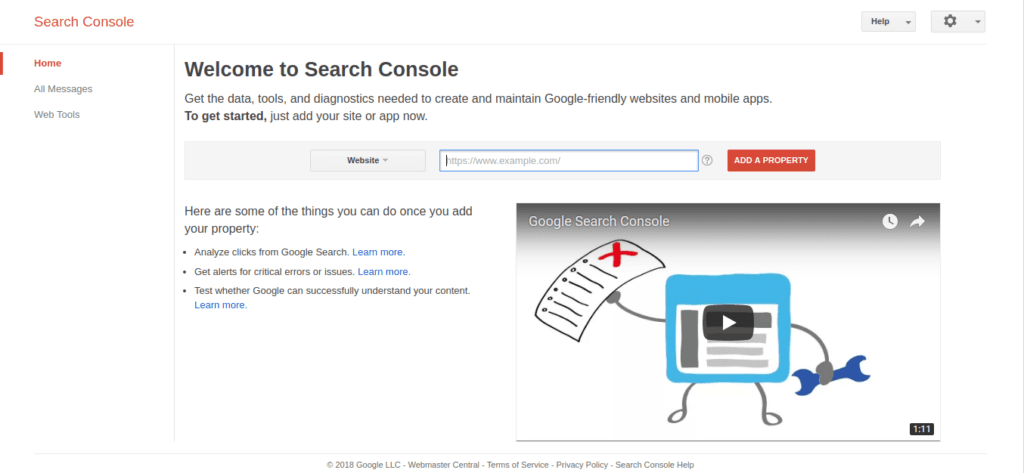

To use the Fetch as Google tool, visit Google Search Console. You’ll need a Google Account to access it. Here’s an overview of what Google Search Console looks like:

You will be prompted for a website by the Search Console. Our React web app is hosted at https://react-seo-app.herokuapp.com/ . Input your website URL and then click on the Add a Property button.

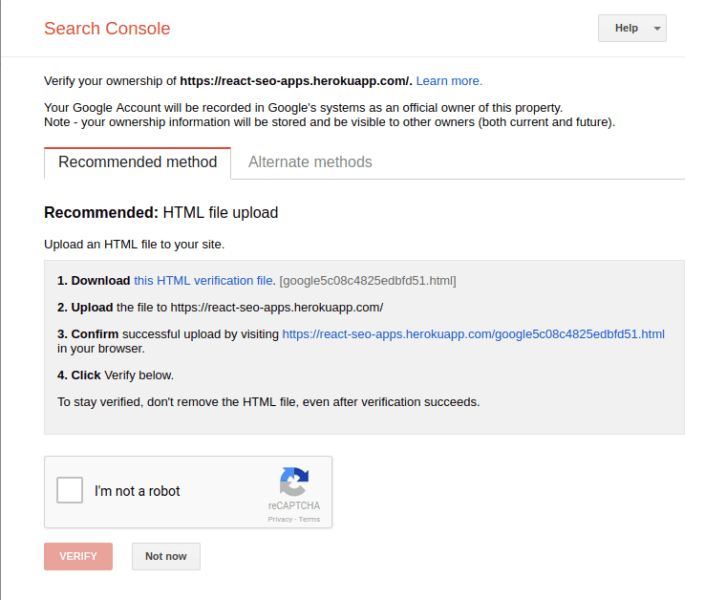

At this point, the Search Console asks you to verify the URL that you would like to test. To verify the URL, follow the steps laid down in the recommended method:

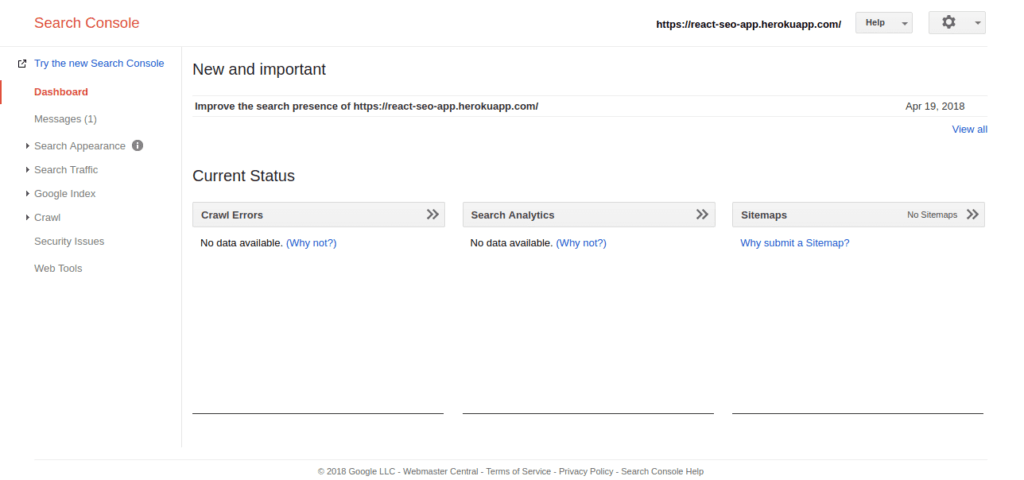

After verifying your URL, you should see a menu similar to this:

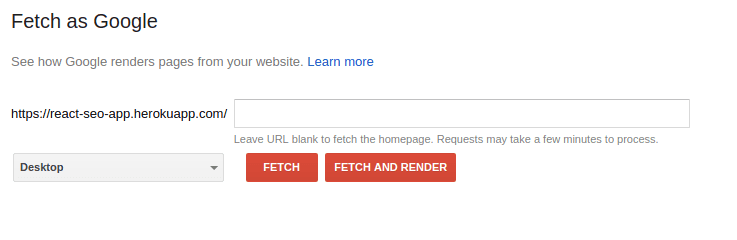

Navigate to the sidebar and click on the Crawl option. You should see Fetch as Google:

Using Fetch as Google, you can test specific pages of your website by entering their paths in the text box. Say we’ve got a /products page that we want to test: we would enter /products in the text box. Leaving it blank tests the index page of our website.
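The path in the text box is relative to the property URL you verified. As a rough illustration of how the two combine, here is a minimal sketch using the standard `URL` class. `resolveTestUrl` is a hypothetical helper, not something Search Console exposes.

```javascript
// Resolve the path typed into the text box against the property URL.
// resolveTestUrl is a hypothetical helper for illustration only.
function resolveTestUrl(propertyUrl, path) {
  // An empty path means "test the index page".
  return new URL(path || "/", propertyUrl).href;
}

console.log(resolveTestUrl("https://react-seo-app.herokuapp.com/", "/products"));
// https://react-seo-app.herokuapp.com/products
console.log(resolveTestUrl("https://react-seo-app.herokuapp.com/", ""));
// https://react-seo-app.herokuapp.com/
```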

Fetch as Google lets you test your website using two different modes:

- Fetch: fetches a specified URL in your site and displays the HTTP response. Fetch does not request or run any associated resources such as images or scripts on the page.

- Fetch and Render: fetches a specified URL in your site, displays the HTTP response, and also renders the page. It requests and runs all resources on the page.
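The difference between the two modes matters for client-rendered React apps, because the raw HTTP response often contains only an empty mount point and a script tag. The sketch below approximates the plain Fetch mode locally by checking whether some text is present in the raw HTML before any script runs. It assumes Node 18 or later for the global `fetch`. `rawHtmlContains` and `fetchLikeGooglebot` are hypothetical helpers, and the two HTML strings are illustrative markup, not copied from the sample app.

```javascript
// A user agent string that Google documents for Googlebot.
// Sending it does not make the request come from Google; it only labels it.
const GOOGLEBOT_UA =
  "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)";

// Pure check: is the text already in the markup, before any script runs?
function rawHtmlContains(html, text) {
  return html.toLowerCase().includes(text.toLowerCase());
}

// Hypothetical helper: fetch the raw response without executing scripts.
// Assumes Node 18+ where fetch is a global. Not called in this sketch.
async function fetchLikeGooglebot(url) {
  const response = await fetch(url, {
    headers: { "User-Agent": GOOGLEBOT_UA },
  });
  return { status: response.status, html: await response.text() };
}

// Illustrative markup (assumed): a client-rendered shell and a server-rendered page.
const clientRenderedShell =
  '<!DOCTYPE html><html><body><div id="root"></div>' +
  '<script src="/static/js/main.js"></script></body></html>';
const serverRenderedPage =
  '<!DOCTYPE html><html><body><div id="root"><div>' +
  "<h1>Googlebot will always crawl</h1></div></div></body></html>";

console.log(rawHtmlContains(clientRenderedShell, "Googlebot will always crawl")); // false
console.log(rawHtmlContains(serverRenderedPage, "Googlebot will always crawl")); // true
```

To try it against a live page, you could call `fetchLikeGooglebot` with your own URL and pass the returned `html` to `rawHtmlContains`. A `false` result only says the text is missing from the raw HTML; Fetch and Render is still the way to see what Googlebot gets after scripts run.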

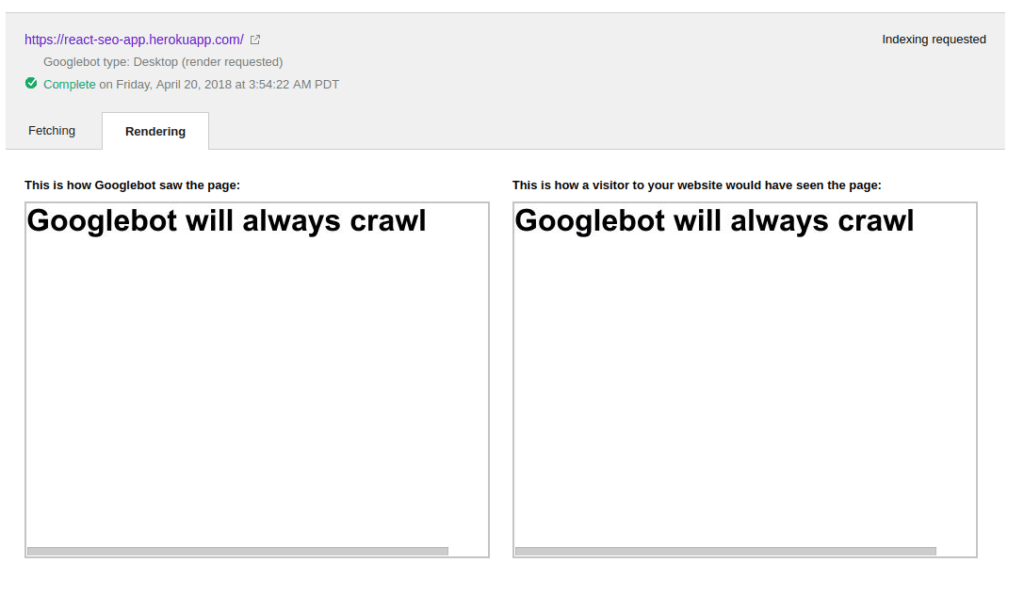

We will be using the Fetch and Render option to detect the difference between how Googlebot sees your page and how a user sees that same page. When we run Fetch and Render on our test React web app, here’s the result:

In the example above, Googlebot sees exactly what visitors to your website would see in their browser, which is a good thing. However, some websites tested with Fetch as Google return a different or blank output under the “This is how Googlebot saw the page” section. This is likely a result of how the site was built.

The implication is that Google, and potentially other search engines, will be unable to read your site, and you could be losing out on SEO. One possible cause is content that loads too slowly: Googlebot does not wait indefinitely, so asynchronous work such as AJAX requests or long setTimeout callbacks may not have finished by the time it renders your site.

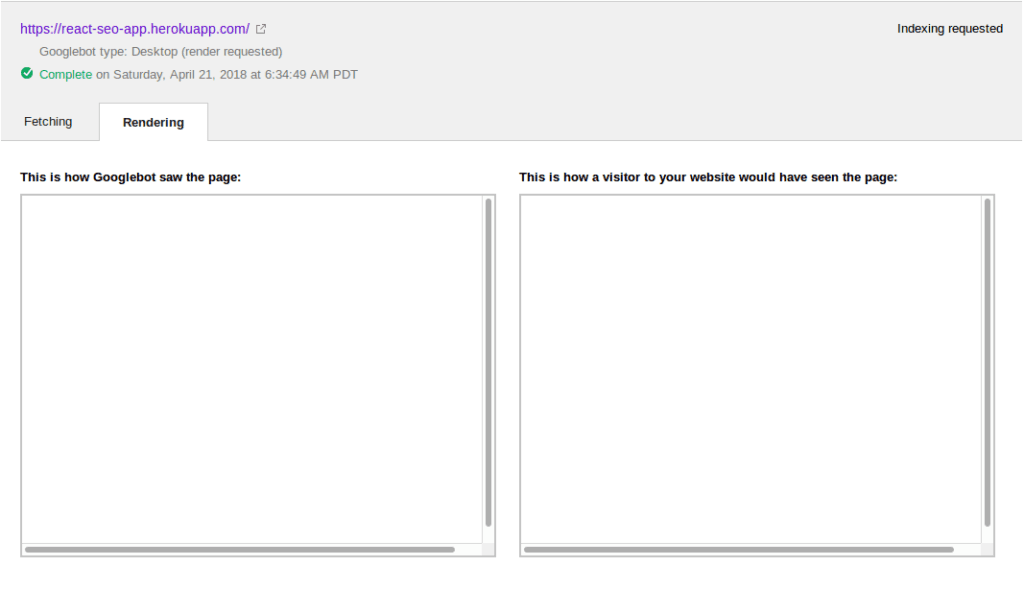

How can we tell? There’s only one way to find out. Let’s make some modifications to our React app and test once more using Fetch as Google. We’ll use a setTimeout to slow things down a bit and make the app display the “Googlebot will always crawl” message after, say, 20 seconds:

```jsx
import React from "react";

class App extends React.Component {
  constructor(props) {
    super(props);
    // Start with an empty message so the first render has no heading text.
    this.state = { message: "" };
  }

  componentDidMount() {
    // Delay the message by 20 seconds to simulate slow-loading content.
    setTimeout(() => {
      this.setState({
        message: "Googlebot will always crawl"
      });
    }, 20000);
  }

  render() {
    return (
      <div>
        <h1>{this.state.message}</h1>
      </div>
    );
  }
}

export default App;
```

After the setTimeout was implemented, this was the output:

Clearly, Googlebot doesn’t wait for things that take too long to load.
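One way to reason about this result is to picture the renderer taking a snapshot of the page after some time budget. The sketch below models a page as a list of timed content updates and asks what is visible at a given moment. The 5000 ms budget is a made-up number for illustration, since Google does not publish a fixed value, and `snapshotAt` is a hypothetical function, not how Googlebot is implemented.

```javascript
// Model a page as timed content updates and ask what is visible at a given moment.
// This is a thought experiment, not Googlebot's actual algorithm.
function snapshotAt(updates, budgetMs) {
  let visible = "";
  // Apply updates in time order, stopping at the first one that lands after the budget.
  const ordered = [...updates].sort((a, b) => a.atMs - b.atMs);
  for (const update of ordered) {
    if (update.atMs > budgetMs) break;
    visible = update.content;
  }
  return visible;
}

// Assumed budget for illustration only; the real value is not published.
const ASSUMED_BUDGET_MS = 5000;

// First version of the app: the heading is there on the first render.
const immediateApp = [
  { atMs: 0, content: "<h1>Googlebot will always crawl</h1>" },
];

// Second version: empty heading first, message after the 20 second setTimeout.
const delayedApp = [
  { atMs: 0, content: "<h1></h1>" },
  { atMs: 20000, content: "<h1>Googlebot will always crawl</h1>" },
];

console.log(snapshotAt(immediateApp, ASSUMED_BUDGET_MS)); // <h1>Googlebot will always crawl</h1>
console.log(snapshotAt(delayedApp, ASSUMED_BUDGET_MS)); // <h1></h1>
```

Whatever the real budget is, the takeaway is the same: content that is present on the first render is safe, while content that depends on a long delay may be missed.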

Conclusion — What you should do

Google can crawl even “heavy” React sites quite effectively. However, you have to build your application so that the important content you want Googlebot to crawl is available as soon as your app loads. Things to take note of include:

- Rendering your page on the server so it can load immediately. You can learn more about that in my article on server-side React here

- Making asynchronous calls only after you’ve rendered your components

- Testing each of your pages using Fetch as Google to ensure that Googlebot is finding your content

- If your website is going to rely heavily on search engine indexing, I suggest you render content on the server. This way, you’re always sure that whatever the server has, the search engine will index.
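The server-rendering advice comes down to putting the content in the HTML response itself, so that it is there before any script runs. In a React app, the markup would typically come from `renderToString` in `react-dom/server`. To stay dependency-free, the sketch below builds the string by hand instead. `renderPage` and `escapeHtml` are hypothetical helpers, and the page title and script path are placeholders.

```javascript
// Escape text before placing it inside HTML.
function escapeHtml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Hypothetical stand-in for a server render step: the heading is part of
// the response body, so a crawler sees it without running any JavaScript.
function renderPage(message) {
  return [
    "<!DOCTYPE html>",
    "<html>",
    "<head><title>React SEO app</title></head>", // placeholder title
    "<body>",
    `<div id="root"><div><h1>${escapeHtml(message)}</h1></div></div>`,
    '<script src="/static/js/main.js"></script>', // placeholder bundle path
    "</body>",
    "</html>",
  ].join("\n");
}

const renderedHtml = renderPage("Googlebot will always crawl");
console.log(renderedHtml.includes("<h1>Googlebot will always crawl</h1>")); // true
```

The client bundle can still take over the page after it loads. The point is that the first response already carries the content you want indexed.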